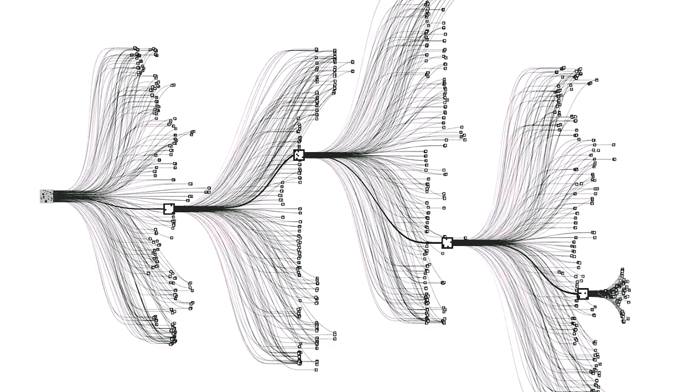

Feature photo: a depiction of the search tree used by AlphaGo, Google’s Go-playing AI program.

The year was 1966. The nascent field of artificial intelligence had begun to attract interest from academics in cognitive and computer science. AI pioneer Marvin Minsky had founded one of the early meccas of AI, the MIT Artificial Intelligence Lab, several years earlier. The lab boasted several of the world’s leading academics, including John McCarthy, who coined the term “artificial intelligence”.

It was in that year that Joseph Weizenbaum, another professor at MIT, made an innocuous request to the nontechnical staff of the lab: to have a chat on the computer with a psychotherapist by the name of “Doctor”. Nothing seemed amiss; the staff found the Doctor quite sympathetic, and many spent hours talking to it, revealing their personal problems and insecurities. Weizenbaum’s own secretary reportedly asked him to leave the room, so that she and the Doctor could have a private, intimate conversation.

The staff didn’t know, however, that the “Doctor” wasn’t a psychotherapist at all. In fact, it wasn’t even human.

“Doctor” was a computer program called ELIZA that Weizenbaum had created over the previous two years. Weizenbaum chose the domain of psychotherapy because it allowed him to “sidestep the problem of giving the program real-world knowledge”. Instead, he wrote a set of simple rules that would reverse questions directed at the program, or prompt the patient to continue speaking. For example, when asked “What is your favourite kind of ice cream?” ELIZA might reply “Why do you mention ice cream?” or simply “Why does that question interest you?”
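To get a feel for how little machinery such rules need, here is a minimal sketch in Python. It is not Weizenbaum's original script: the two patterns, the fallback replies, and the names `RULES` and `respond` are all hypothetical, chosen only to reproduce the ice cream exchange above.

```python
import re

# Hypothetical rules written for illustration; NOT Weizenbaum's original script.
# Each rule pairs a pattern with a reply template that reflects the topic back.
RULES = [
    (re.compile(r"favou?rite kind of (?P<topic>[\w ]+)", re.IGNORECASE),
     "Why do you mention {topic}?"),
    (re.compile(r"\bI feel (?P<topic>[\w ]+)", re.IGNORECASE),
     "Why do you feel {topic}?"),
]


def respond(utterance: str) -> str:
    """Return a canned reply using the first matching rule, else a generic prompt."""
    text = utterance.strip()
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(topic=match.group("topic").strip())
    # No rule matched: deflect a question, or nudge the speaker to keep talking.
    if text.endswith("?"):
        return "Why does that question interest you?"
    return "Please go on."


print(respond("What is your favourite kind of ice cream?"))
print(respond("Do you think I work too much?"))
```

Nothing in this sketch stores or models what the speaker said; it only rearranges their own words, which is the point Weizenbaum found so unsettling.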

Weizenbaum was shocked that people so easily divulged their personal information to such a simple program. What particularly disgusted him was that the Doctor’s patients believed the program actually understood their problems. Disturbed by the implications of his project, and perceiving his program to be a threat, Weizenbaum tried to shut ELIZA down. He later wrote a book about the experience, warning against the “reductionist onslaught” of research in computer science and artificial intelligence.

With the ELIZA project largely abandoned, research in artificial intelligence – the field concerned with building systems that act intelligently and independently from humans – continued without a primary focus on large-scale applications. The public conception of AI was relegated to its depiction in science fiction movies, and aside from the breakthrough that saw the Deep Blue program beat Garry Kasparov at chess in 1997, there was little popular discussion about the state of AI research and the direction it was headed in.

But the last several years have seen a resurgence of AI into the public sphere: the number of news articles written on AI has skyrocketed, investments in AI start-ups from venture capitalists have soared, and the White House has even planned a series of workshops entitled “Preparing for the Future of Artificial Intelligence.” Stephen Hawking, Elon Musk, Bill Gates, and other notable figures have started to raise concerns about the future of AI research and the corresponding existential risk. People are seriously pondering the future of humanity as one of many species with intelligence. In short, the long-held view of AI as an arcane technology is beginning to erode.

Among those who discuss the rapid progress of artificial intelligence, a recurring question arises: what would it be like for artificial intelligence to play an integral role in our daily lives?

It is of course an interesting question: contemplating the impact of a world filled with artificial sentient agents is intellectually enticing. Yet a fantasy world where AI is everywhere is not just a tale of the distant future – in many ways, it is the world we currently live in.

While recent advances in AI have been well covered by the media – from personal assistants like Microsoft’s Cortana or Apple’s Siri, to breakthroughs like the Watson Jeopardy! program and Google’s AlphaGo – this is just the tip of the iceberg. It’s easy to underestimate how ubiquitous AI has become in our society; huge tech companies are not just sinking massive funds into cutting-edge research, they are actually deploying this technology into countless products that you use almost every minute.

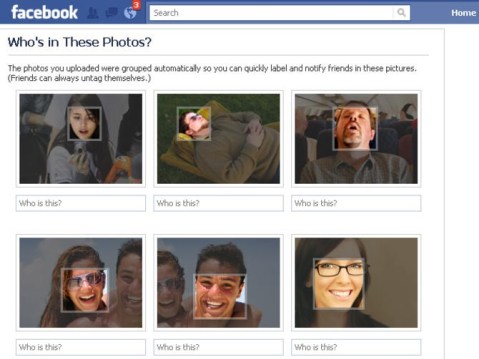

Hard to believe? Consider this: every time you search something on Google, you invoke the use of algorithms that are now dependent on AI. When Amazon or Netflix recommend a product for you to buy or a movie for you to watch, they use a form of AI. Every time you translate something on the web, use speech-to-text or voice recognition, or get tag suggestions for a photo you upload to Facebook, you witness the use of AI and machine learning. Even your passive consumption of e-mail and web advertisements is optimized behind the scenes by AI.

It’s not just web services and technology companies making use of AI. Hospitals use AI to detect tumors from medical images, or to predict the onset of seizures using EEG signals. Banks use AI to detect fraud. Any company can now see what users think about their products in real time on Twitter using advances in natural language processing; these companies are also beginning to automate their customer service and marketing departments by outsourcing to AI startups. Today, high-frequency AI traders account for more than half of equity shares traded on US markets.

If these products don’t seem like AI to you, that’s probably because there is a discrepancy between your conception of AI as disturbingly futuristic and the comfort with which you have adopted its technological by-products. As MIT’s John McCarthy once noted: “As soon as it works, no one calls it AI anymore.”

So, how did most of the online services you cherish come to rely on previously esoteric technology? The sudden surge in AI can largely be attributed to three factors.

The first is computing power: the inexorable progress of Moore’s law has seen us double the number of transistors per square inch roughly every two years. A modern calculator is far more powerful than the computer that took Apollo to the moon. This added computational power has been a huge boost to the development of AI systems.

The second factor is the access to large amounts of data: in an age where people are beginning to conduct much of their daily life online, the amount of data available is simply staggering. A 2013 report by SINTEF estimated that 90% of all information in the world had been created in the last two years, a rate which is nearly doubling every year and a half.

What does data have to do with artificial intelligence? The fact is that huge datasets, by themselves, aren’t particularly useful; they are far too large to be analyzed manually by humans. The real power of big data comes from the machines we build to analyze patterns in them – in other words, machines that learn from data to make useful predictions about the real world. It follows that the third factor contributing to the recent surge in AI has come from advances in the construction of these machine learning algorithms.

This approach is markedly different from much of the research that was conducted in the early days of the field. Rule-based programs like ELIZA had no way of learning about the world around them. Other research focused on search algorithms or formal logic, which do not require learning. And while there were some significant developments in learning algorithms and theory in the 1960s through 90s, learning wasn’t universally accepted as a fundamental component of AI.

This is the most important realization from the last 50 years of AI research: much as humans learn to use sensory information in the form of sound or sight, truly intelligent machines need to learn from the data available to them.

One particularly promising approach to machine learning and AI that has exploded in popularity is deep learning. Although the basic techniques were invented in the 1960s through 80s, they were largely abandoned during the AI winter of the subsequent decades[i]. But deep learning has seen an incredible revival; it is the driving force behind the current surge in AI applications, and has consequently seen huge investments from tech giants Google, Facebook, Microsoft and Baidu. Deep learning is also one of the major components behind recent AI breakthroughs such as AlphaGo.

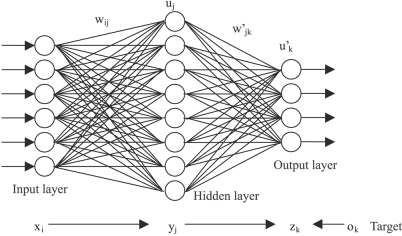

So how does it work? Deep learning is founded on algorithms called artificial neural networks. The idea is simple: analogous to how neurons in the brain are connected together to form a large network, we can think of building an artificial neuron that performs simple calculations. These artificial neurons are stacked together in multiple layers to form a neural network, which is trained to perform a task such as object recognition in an image.
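As a rough illustration of what "simple calculations" and "stacked in layers" mean, here is a minimal sketch in plain Python. The sigmoid activation, the function names, and every number in `toy_layers` are assumptions made for the example; real networks are far larger and their numbers are learned rather than typed in by hand.

```python
import math


def neuron(inputs, weights, bias):
    """One artificial neuron: a weighted sum of its inputs squashed into (0, 1)."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))  # sigmoid activation (an assumed choice)


def layer(inputs, weight_rows, biases):
    """A layer is several neurons that all look at the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]


def network(inputs, layers):
    """Stack layers: the outputs of one layer become the inputs of the next."""
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer(activations, weight_rows, biases)
    return activations


# Arbitrary illustrative numbers: 2 inputs -> 3 hidden neurons -> 1 output.
toy_layers = [
    ([[0.5, -0.5], [1.0, 1.0], [-1.0, 0.5]], [0.0, -0.5, 0.1]),
    ([[1.0, -1.0, 0.5]], [0.0]),
]
output = network([1.0, 0.0], toy_layers)
print(output)  # a single number between 0 and 1
```

Each neuron does nothing more than multiply, add, and squash; whatever power the network has comes from combining many of them.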

Despite what you might read in the popular press, neural networks do not actually attempt to copy the brain. Rather, they are brain inspired; the inner workings of neural network training algorithms seem to be fundamentally different from the processes that make the brain tick[ii].

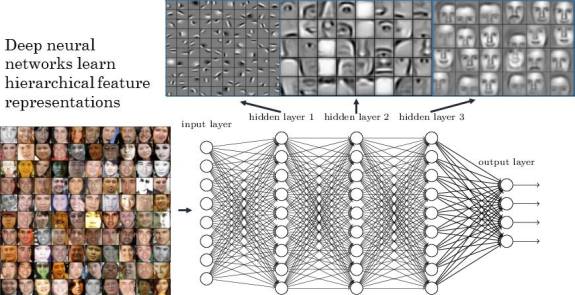

There are several theories as to why neural networks have outperformed other recent AI methods, but a leading hypothesis is that they learn representations of the world that are similar to what humans learn. Think of it this way: what do you see when you look at a chair? Of course you see the chair in its entirety, but without conscious effort you can see the parts that make up the chair: the legs, the seat, and the back. These in turn are composed of combinations of edges, corners, and curves. Your brain automatically figures out a hierarchy of features that are represented in your mind, which you use to interact with the world. What we’ve discovered is that deep networks also learn layers of representations. Lower layers in a neural network represent basic features such as edges and textures, while higher layers in the network are free to represent more complex features like the legs of a chair or wheels of a car. It seems that neural networks are structured in such a way as to make them learn better from data in the real world[iii].

All of this avoids a fundamental question: how do you get a machine to learn about the world?

Unfortunately, the answer is not so simple; entire textbooks are devoted to this topic. In the case of neural networks, the output of the network is controlled by a huge number of tuning knobs called parameters. At the start of the life of the network, the knobs are set at random, and the output of the network is almost always going to be wrong. But the network undergoes a training process, where it is presented with a series of inputs and the correct outputs, and it figures out how to tweak its internal knobs to make it more likely to give the correct output the next time it is presented an input. The algorithm for doing this tweaking is called backpropagation – the force that drives these networks to learn representations of the world.
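The knob-tweaking loop can be sketched in a few lines of Python. This is not a full backpropagation implementation: it trains a single artificial neuron by gradient descent, the one-layer special case of what backpropagation does across many layers using the chain rule. The function names, the logical-OR toy task, the learning rate, and the step count are all assumptions chosen for illustration.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, inputs):
    """Output of one neuron for the given inputs."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


def mean_squared_error(weights, bias, examples):
    return sum((predict(weights, bias, x) - y) ** 2 for x, y in examples) / len(examples)


def train(examples, steps=5000, learning_rate=1.0, seed=0):
    """Start with random knobs, then repeatedly nudge them to reduce the error."""
    rng = random.Random(seed)
    weights = [rng.uniform(-1, 1) for _ in examples[0][0]]
    bias = rng.uniform(-1, 1)
    for _ in range(steps):
        for inputs, target in examples:
            output = predict(weights, bias, inputs)
            # Chain rule: gradient of half the squared error through the sigmoid.
            delta = (output - target) * output * (1.0 - output)
            weights = [w - learning_rate * delta * x for w, x in zip(weights, inputs)]
            bias -= learning_rate * delta
    return weights, bias


# Toy task (an assumption for this sketch): learn logical OR from four examples.
OR_EXAMPLES = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]
weights, bias = train(OR_EXAMPLES)
for inputs, target in OR_EXAMPLES:
    print(inputs, target, round(predict(weights, bias, inputs), 3))
```

In a deep network the same error signal is passed backwards from the output layer through every earlier layer, so that each knob receives its own share of the blame.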

Modern neural networks can contain millions of parameters, and can take a long time to train, but the end result is often worth it: deep neural networks have achieved unprecedented results on a wide range of tasks such as object recognition, where deep networks have attained nearly human-level accuracy. They’ve also achieved state-of-the-art performance in language translation, speech recognition, text parsing and other fields. Deep neural networks can now automatically derive captions of images, analyze massive amounts of genomic data, generate realistic images that have never been seen before, and even produce novel works of art.

Of course, there are significant challenges ahead before we create an AI with human-level abilities across every task. Neural networks learn much less efficiently than the human brain, which is why massive datasets are required to train them. Deep nets also struggle to learn when there are very few labels for the available data, a domain called semi-supervised or unsupervised learning. Since much of human learning is done with few explicit labels, efficient unsupervised learning is seen as one of the holy grails of modern deep learning.

We live in a world of technology driven by AI that is fuelled by furiously paced research at huge companies like Google, Facebook and Microsoft, and by academic institutions like Stanford, Carnegie Mellon, and the University of Montreal[iv]. The rate of technological progress increases every year, and it shows no sign of slowing down; indeed, some experts argue that this rate is exponential. So while artificial intelligence has already pervaded many aspects of our lives, it may not be long before AI transforms the world into something truly unrecognizable to the people of today.

[i] In fact, one of the primary reasons for the initial abandonment of these techniques was a 1969 book entitled “Perceptrons” by MIT professors Marvin Minsky and Seymour Papert.

[ii] Although leading AI researchers Yoshua Bengio, Geoff Hinton, and others are attempting to reconcile neural network training algorithms with neuroscience.

[iii] In technical terms, when using neural networks we are placing a strong prior on the space of possible learned functions, in favour of functions that have deep, compositional feature representations. These priors help combat what in machine learning is called the ‘curse of dimensionality’.

[iv] The University of Montreal is (arguably) the best academic institution for deep learning in the world.

Ryan Lowe is a PhD student in machine learning at McGill University. His current research focuses on deep learning applied to building dialogue models. His other interests include reinforcement learning, causal models, and biologically plausible AI. See his website for more information about his research and other activities.